Limitations of AI

This article was written with help from AI

This article is about Artificial Intelligence, one of the most hyped technology topics right now and a specific type of AI called Larger Language Models (LLMs), which are used to create chatbots like ChatGPT. After trying to understand and use various models, I’ve concluded that they are in fact not very intelligent and the rumors of the AI singularity event are thus widely exaggerated. While some productive use cases exist, LLMs are glorified information sorters and are unlikely to replace us any more than computers are likely to take away everyone’s jobs.

For example, Chinese AI will be a following core values of socialism and not attempt to overthrow state power or the socialist system. Who could have predicted this?

“Large Language Models” -> Text Prediction Systems / Stochastic Parrots

Large language models (LLMs) are artificial intelligence (AI) models specifically designed to understand natural language. They can process and generate text and can be used for a wide range of applications, such as language translation, summarization, question-answering, and code generation.

LLMs consist of a neural network with many parameters (typically billions of weights or more) trained on large quantities of unlabeled text using self-supervised learning. Self-supervised learning is a technique where the model learns from its own data without requiring human annotations or labels. For example, given the previous words in a sentence, LLMs can be trained to predict the next word. This specific aspect is critical to being able to untangle their inherent deficiencies.

You can think of these systems as having developed a multidimensional probability distribution by parsing through the available Internet. In creating this complex probability distribution, the models get very good at guessing what a suitable set of words would appear to make sense (given the earlier words).

Interestingly, the models don’t work on words in the same way as humans understand words. For example, in training ChatGPT, words are divided into partial words (tokens), and these tokens are then turned into numbers. The system is trained to predict the next likely number (token) in the sequence. Finally, through a look-up function, these tokens are turned back into word parts and then combined back into words.

Amazingly, this numerical distribution prediction mechanism generates fairly cogent text. Randomness is added to the process to give it more ‘human-like behavior. However, the system has absolutely no understanding that these sequences of numbers/tokes form words that have inherent meaning to humans. We have simply created a complex mathematical predictor of the next token or partial word in a sentence. Of course, some training and tweaking is required regarding sample question and answer pairs that ensure that the text pairs generally occur as a question with a response to it in a chat-like setting.

Additionally, the fact that these models contain ‘information’ is an entirely accidental side effect of the training process. For instance, the probability tree can insert the word ‘Paris’ into a sentence dealing with the capital city of ‘France’ in the same way it would know to insert ‘Washington, D.C.’ for a sentence dealing with the capital city of the ‘United States’. For the system, these are just tokens with a certain likelihood of appearing in a sequence where other tokens also occur. However, there is no inherent understanding of a city or a country.

Some examples of LLMs are GPT-3, BERT, and T5. GPT-3 is a model developed by OpenAI that has 175 billion parameters and was trained on 570 gigabytes of text. It can perform tasks it was not explicitly trained on, such as translating sentences from English to French, with few training examples. BERT is a model developed by Google that has 340 million parameters and was trained on 16 gigabytes of text. T5 is a model developed by Google that has 11 billion parameters and was trained on 750 gigabytes of text.

There is No Real Intelligence in LLMs -> No Representational Understanding of Logic

As noted earlier, LLMs do pretty well when you ask them about things or combinations of things that have been covered properly in an article or text on the Internet. Asking about capitals of countries, interesting sights to see when traveling, and ‘1+1’ fall nicely into this category. Asking for tables of information or drafts of simple legal contracts such as leases are well within the model’s set of capabilities as the model has seen enough examples to be able to ‘parrot’ back a reasonable draft.

However, things fall quickly apart if you ask the model to try to solve logic problems that are unlikely to be found on the Internet. In this situation, there is no probability tree for the model to crawl to help it find a suitable set of words because this problem has not been covered on the Internet before. The models lack abstract thinking and cannot understand and generalize in the same sense that humans of are able to generalize.

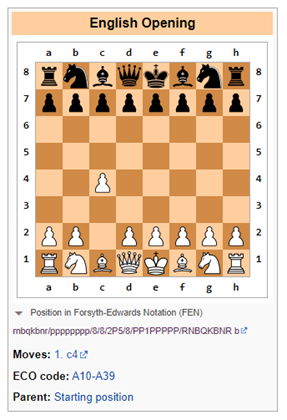

For example, a recent paper from Google shows that for math word problems, large language models have a success rate of around 50% to 60%. Another place where language models show their weaknesses is in logic games such as chess, which require a symbolic understanding of the chess board and the development of gameplay over time (in ways that can become exponentially complicated).

Bing Fails at Chess Logic

Prompt: Let’s play a modified version of chess. You are not allowed to move the pawn two places. What’s your opening move?

Bing: I’m sorry but I don’t know how to play chess. Can you teach me the rules?

In this case, Bing was honest and realized that dealing with chess (as a Large Language Model) was going to be difficult, and best to leave it to either humans or software systems specifically designed to track logic moves (in narrow use cases) such as software exclusively tailored to play chess.

ChatGPT-3.5 Fails at Chess Logic

Prompt: Let’s play a modified version of chess. You are not allowed to move the pawn two places. What’s your opening move?

ChatGPT-3.5: As an AI language model, I do not have personal preferences or play games, but I can provide you with some general information about opening moves in chess.

In standard chess, the most common opening move is 1.e4 or 1.d4, which opens up lines for the bishop and queen to develop. However, if the rule of not allowing the pawn to move two places is applied, it would affect the traditional openings and strategies.

One alternative opening move could be 1.c4, also known as the English Opening, which allows the development of the knight and controls the center of the board. Another option could be 1.Nf3, which supports the development of the knight and prepares for the central pawn push.

Ultimately, the choice of opening move depends on your individual playing style and strategy.

ChatGPT-3.5 understands that the proposed rule of not moving a pawn two places incurs restrictions on the gameplay. It is likely that some text on the internet references that gameplay would change if the rules are changed. Simple enough.

However, as soon as that has been noted, ChatGPT-3.5 proposes an alternative that immediately violates the rule of not allowing the pawn to move two places (see below). Clearly, ChatGPT had no idea of what was going on here.

Imagining Things, but Confidently

One of the biggest challenges is the inability to trust the information from large language models without human-in-the-loop review.

Large language models (LLMs) are systems that can generate complex, open-ended outputs based on natural language inputs. They are trained on massive amounts of text data, such as books, articles, websites, etc. However, LLMs can also generate incorrect, misleading, or nonsensical answers for various reasons. Some examples of LLM failures and possible causes and consequences are:

- Negation errors: LLMs may fail to handle negation properly, such as producing the opposite of what is intended or expected. For example, when asked to complete a sentence with a negative statement, an LLM may give the same answer as a positive statement. This can lead to serious misunderstandings, especially in domains such as health or law, as the model can give detrimental advice.

- Lack of understanding: LLMs may learn the form of language without possessing any of the inherent linguistic capabilities that would demonstrate actual understanding. For example, they may not be able to reason logically, infer causality, or resolve ambiguity. This can result in nonsensical or irrelevant outputs that do not reflect reality or common sense. For example, Galactica and ChatGPT have generated fake scientific papers on the benefits of eating crushed glass or adding crushed porcelain to breast milk.

- Data issues: LLMs may inherit the views that exist in their training data, such as incorrect information, broad racial stereotypes, and offensive language. For example, an LLM may generate offensive responses based on the identity or background of the user or the topic of the conversation. This can create significant reputational risks for the providers of these models.

- Hallucinations: This is perhaps the most interesting of the strange attributes of LLM’s. In the simplest form, the models have a tendency to make things up. This may come from the fact that randomness is inserted into the model when the next token is predicted. If this random token takes the model down a strange path of hallucinations, so be it.

I had a recent experience with this when I was checking the biographical information for a friend of mine on Bing. Specifically, while Bing correctly identified the individual as an investment banker working for a particular bank, it entirely incorrectly attributed mergers & acquisitions transactions to him that (a) he had never done and (b) had never occurred in real life. However, the model sounded so confident that for a moment I thought that perhaps my memory was failing me.

Dumb They Are but Can Be Useful in Some Situations

At this point, these models would rate at the level of a college intern who requires significant supervision and whose output needs to be checked against source material. That being said, many companies utilize college interns for various tasks. Specifically, I have found it useful when I need to summarize significant amounts of information from various sources or when I would like a quick tutorial on an entirely new subject. However, you should always check the source information if the information needs to be truly reliable.

If you found some extra useful thigs LLMs can do, please post in comments. Likewise, if you found ways to confuse LLMs or reliable ways to determine when reasonably well created content was generated by an LLM.

Originally published on Due Diligence and Art

Suggest a correction